Analyzing Reactions to Political Debates from Tweets

Sentiment analysis is the process of interpreting, classifying, and quantifying subjectivity within text data. This process is mainly used by companies to gauge consumers’ feelings towards brands, products, and services in online discourse. In various fields, sentiment analysis provides an objective way of collecting, reporting, and analyzing content that would otherwise be biased.

Political science is one such field in which sentiment analysis is extremely useful. When running campaigns, advocating for or against bills and laws, or simply serving their terms, politicians use sentiment analysis to gauge public reception of themselves. Data collected allows politicians to see which people are struggling the most, strategize their next moves, and stay ahead of their opponents. However, politicians may only use sentiment analysis on biased platforms, leading to data that aren’t representative of the general public. This behavior often leads to corruption and misinformation.

To accurately tell how the general public feels about politicians, we’ll be using Twitter to gather data about candidates in the 7th Democratic Debate of the 2020 U.S. Presidential Election. Twitter was chosen not only for its diverse user population, but also for the straightforward nature of users’ posts, or Tweets. All users must convey their entire message within the 280-character limit. This unique feature is a reliable indicator of sentiment across all areas, but is especially notable in politics. On Twitter, politicians must express all of their opinions, updates, and other important information in as few words as possible. Twitter’s features, diverse audience, and the volatile nature of politics combined yield data ideal for finding out the true feelings of a select group of people.

This tutorial will teach you how to do the following:

* Use the Twitter API (an API is a set of rules that specifies how software components interact) to collect a fixed number of recent Tweets

* Use “TextBlob,” a Python library for processing textual data that provides an API for natural language processing (NLP) tasks

* Use “Tweepy,” a Python library that provides access to the Twitter API and streamlines automation and bot creation

* Quantify and analyze the sentiment extracted from the data

* Create a stacked bar graph to visualize the data you’ve collected

* Draw accurate conclusions about your data

Tutorial

Getting Started

We will be using the Python library TextBlob to conduct sentiment analysis on our dataset. The TextBlob library provides an API for sentiment analysis, tokenization, classification, and other simple NLP features. To get started, we need to install it. Open your terminal and type in the following:

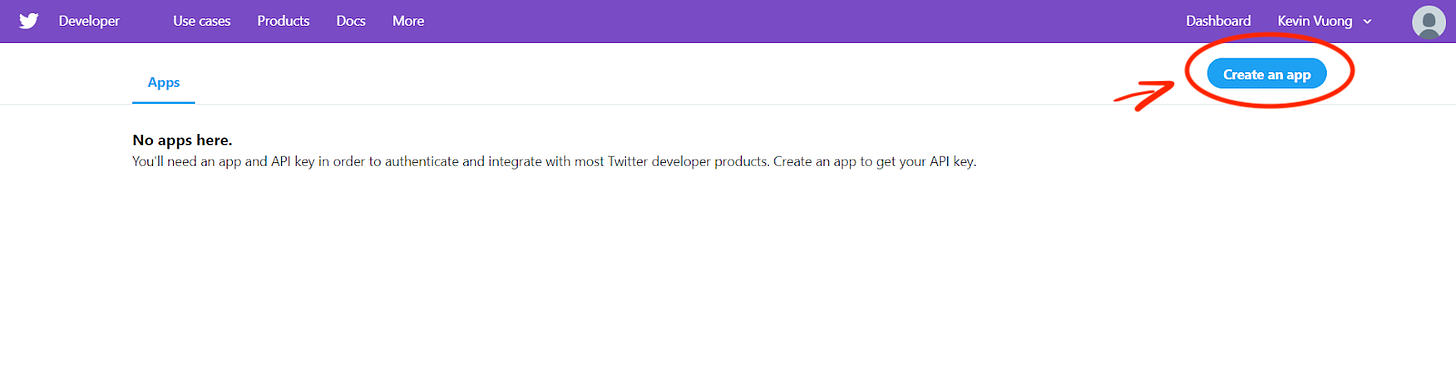

```
$ pip install -U textblob
$ python -m textblob.download_corpora
```

Additionally, we need a Twitter Developer account to obtain the keys required to access the API. Once logged into Twitter, click on the blue "Create an App" button in the top right corner and fill out the app details.

Twitter Developer Portal

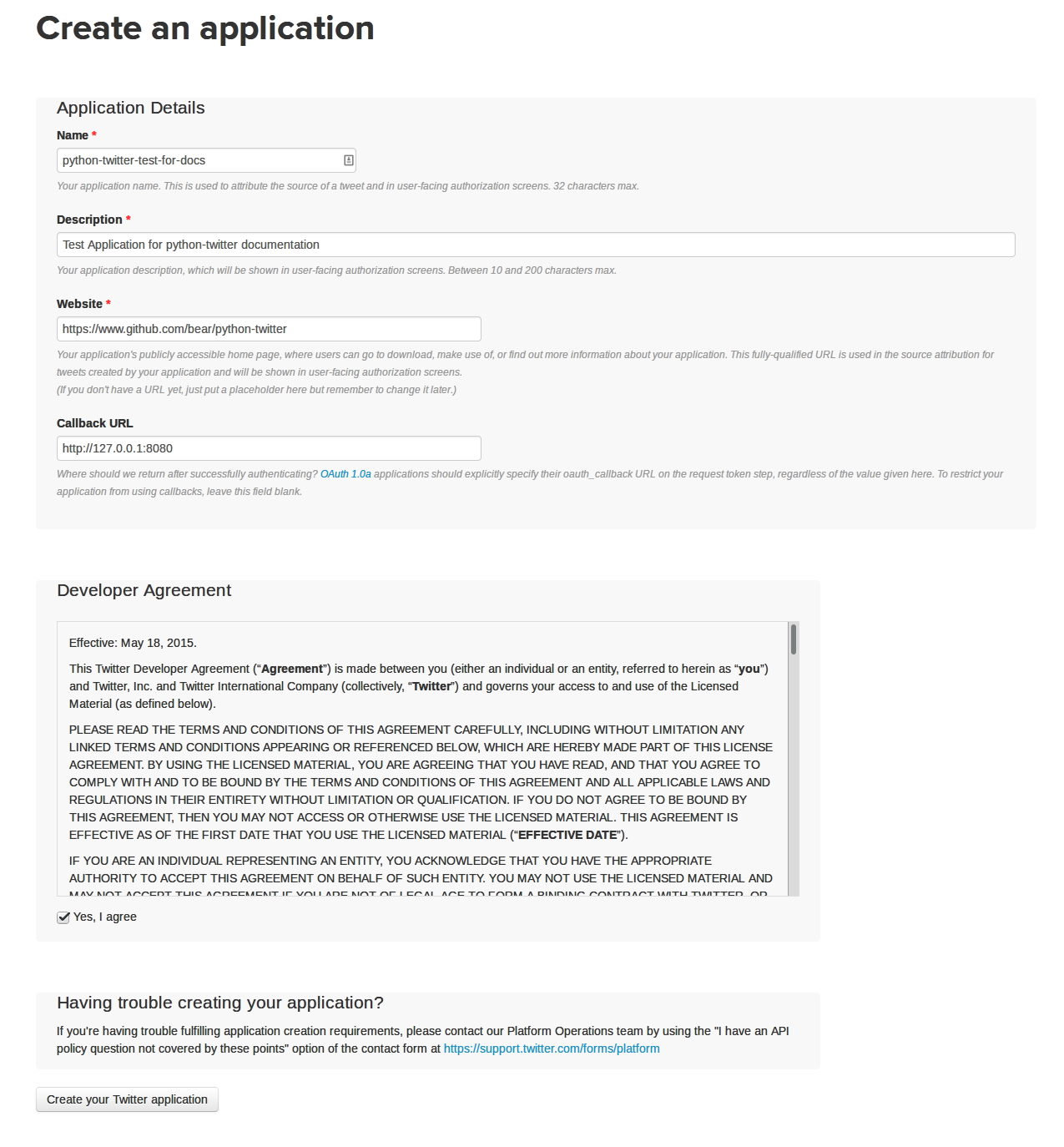

For this tutorial, you can input any website into the website URL field (we used https://bitproject.org), and leave the OAuth Callback URL, TOS, and Privacy Policy fields blank.

Create an App Form

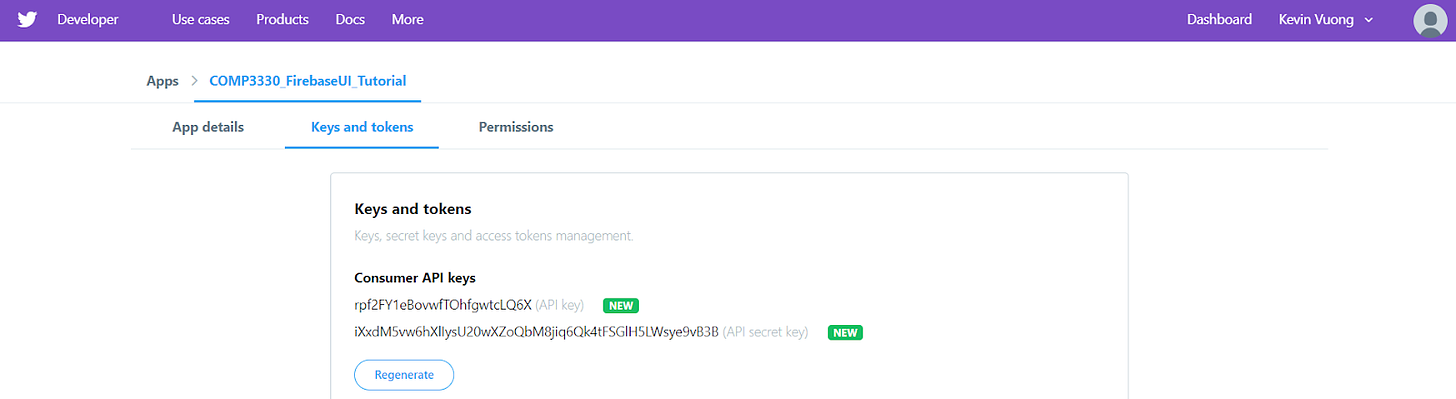

After creating our app, navigating to the "Keys and Tokens" tab will expose the API key and the API secret key. Generate both the access token and the access token secret on the same page as well.

Exposing your Auth tokens

Lastly, install the Tweepy library:

```
$ pip install tweepy
```

Next, install the other libraries we will be using, if you haven’t already: Pandas, Matplotlib, NumPy, and SciPy. Then import os, Tweepy, Pandas, NumPy, matplotlib.pyplot, and SciPy at the top of your program.

Importing Our Python Modules

We are going to import our installed Python libraries as follows:

```python
import os
import tweepy as tw
import pandas as pd
import numpy as np
import matplotlib.pyplot as plt
from scipy import stats
from textblob import TextBlob
# OAuthHandler authenticates with the keys and tokens from the Twitter Dev Console
from tweepy import OAuthHandler
from IPython.display import display, HTML
```

Now we can use these modules as we continue.

Accessing the API

With the access keys, we are going to create a Tweepy API object to fetch and analyze our Tweets.
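The next step stores the credentials directly in variables. If you would rather keep secrets out of your source file, one option is to read them from environment variables with the os module. The sketch below is only an illustration: the environment variable names and the `load_credentials` helper are hypothetical, not something Twitter or Tweepy requires.

```python
import os

# Hypothetical environment variable names; pick whatever names you like.
CREDENTIAL_VARS = (
    "TWITTER_CONSUMER_KEY",
    "TWITTER_CONSUMER_SECRET",
    "TWITTER_ACCESS_TOKEN",
    "TWITTER_ACCESS_TOKEN_SECRET",
)


def load_credentials(env=None):
    """Return the four credentials as a dict, or raise if any are missing."""
    env = os.environ if env is None else env
    missing = [name for name in CREDENTIAL_VARS if not env.get(name)]
    if missing:
        raise KeyError("Missing credentials: " + ", ".join(missing))
    return {name: env[name] for name in CREDENTIAL_VARS}
```

With the variables set in your shell, you could call `creds = load_credentials()` and use `creds["TWITTER_CONSUMER_KEY"]` and the other entries in place of hard-coded strings.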

Place your generated credentials in variables for your Python program so that they’re easily accessible and significantly shorter than your token:

```python
consumer_key = 'xxx'
consumer_secret = 'xxx'
access_token = 'xxx'
access_token_secret = 'xxx'
```

Then, we’re going to use OAuth to generate an authentication object using our consumer key and secret:

```python
auth = OAuthHandler(consumer_key, consumer_secret)
```

Next, we attach our access token and access token secret to the authentication object:

```python
auth.set_access_token(access_token, access_token_secret)
```

Now, we can finally create our API object using Tweepy's API class:

```python
api = tw.API(auth, wait_on_rate_limit=True)
```

Creating a Data Frame

We need to create a data frame to manage and plot our data. To do this, we will use the Pandas library.

Our end result is going to be a data frame indexed by the sentiment categories ['positive', 'neutral', 'negative'], with one column per political candidate. From this data frame, you can easily look up the number of positive, neutral, or negative tweets about a candidate.
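To make the target shape concrete, here is a small sketch of such a data frame. The candidate names and counts are made up for illustration and are not real debate data.

```python
import pandas as pd

# Rows are sentiment categories; each column added later is one candidate.
df = pd.DataFrame([], index=["positive", "neutral", "negative"])

# Hypothetical counts, listed in the same order as the index.
df["Candidate A"] = [40, 35, 25]
df["Candidate B"] = [30, 50, 20]

# Look up one cell by (row label, column label).
positive_a = df.loc["positive", "Candidate A"]

# Column sums give the total number of tweets counted per candidate.
totals = df.sum()
```

Here `df.loc["positive", "Candidate A"]` reads a single count, and `df.sum()` adds up each column.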

First, make an empty data frame df indexed by the list ['positive', 'neutral', 'negative']. These are the categories we will derive from the polarity scores that TextBlob returns:

```python
df = pd.DataFrame([], index=['positive', 'neutral', 'negative'])
```

dem_search is simply a dictionary that maps candidate names to appropriate Twitter search queries. We can use Tweepy to search for tweets that match those queries.

For each search query val, Tweepy's Cursor object lets us retrieve up to num_tweets Tweets that match it:

```python
# Collect tweets (written for Tweepy 3.x; in Tweepy 4.x the method is
# named api.search_tweets and its accepted parameters may differ)
tweets = tw.Cursor(api.search, q=val, lang="en", since=date_since).items(num_tweets)
```

Because we are given a dictionary of search queries, we want to iterate through it and call the above line for each query (each query corresponds to one candidate):

```python
for key, val in search_dict.items():
    # Collect tweets
    tweets = tw.Cursor(api.search, q=val, lang="en", since=date_since).items(num_tweets)
```

Using TextBlob to Generate Sentiment Scores

For each Tweet object in the cursor, we can use the .text attribute to get the Tweet’s text itself. We will make a TextBlob object out of each text.

From there, simply check the value of the .sentiment.polarity attribute. Positive polarity indicates positive sentiment, zero polarity indicates neutral sentiment, and negative polarity indicates negative sentiment.

We keep track of positive, neutral, and negative tweets with counter variables. At the end, we add the acquired sentiment data to the data frame.

Return df at the end.
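The classification rule described above can be tried on its own, without calling Twitter. The sketch below uses only the standard library; the helper names `label_from_polarity` and `count_sentiments` are hypothetical, and the scores are made-up stand-ins for the polarity values TextBlob would return.

```python
from collections import Counter


def label_from_polarity(polarity):
    """Map a polarity score in [-1.0, 1.0] to a sentiment category."""
    if polarity > 0:
        return "positive"
    if polarity == 0:
        return "neutral"
    return "negative"


def count_sentiments(polarities):
    """Return [positive, neutral, negative] counts for a list of scores."""
    counts = Counter(label_from_polarity(p) for p in polarities)
    return [counts["positive"], counts["neutral"], counts["negative"]]


# Made-up scores standing in for TextBlob(t.text).sentiment.polarity
example_scores = [0.5, 0.0, -0.25, 0.8, 0.0]
print(count_sentiments(example_scores))  # prints [2, 2, 1]
```

The returned list is in the same order as the data frame's index, so it can be assigned directly to a candidate's column.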

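```python
def produce_dataframe(search_dict, num_tweets):
    df = pd.DataFrame([], index=['positive', 'neutral', 'negative'])
    for key, val in search_dict.items():
        # ... (collect tweets for this query, as shown above)
        positive = 0
        neutral = 0
        negative = 0
        for t in tweets:
            # Score each Tweet's text and count it in one category
            analysis = TextBlob(t.text)
            if analysis.sentiment.polarity > 0:
                positive += 1
            elif analysis.sentiment.polarity == 0:
                neutral += 1
            else:
                negative += 1
        # One column per candidate, in the same order as the index
        df[key] = [positive, neutral, negative]
    return df
```

Now, we are going to populate the data frame. To do this, call produce_dataframe() once, passing dem_search as the search dictionary and 1000 as the number of tweets.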

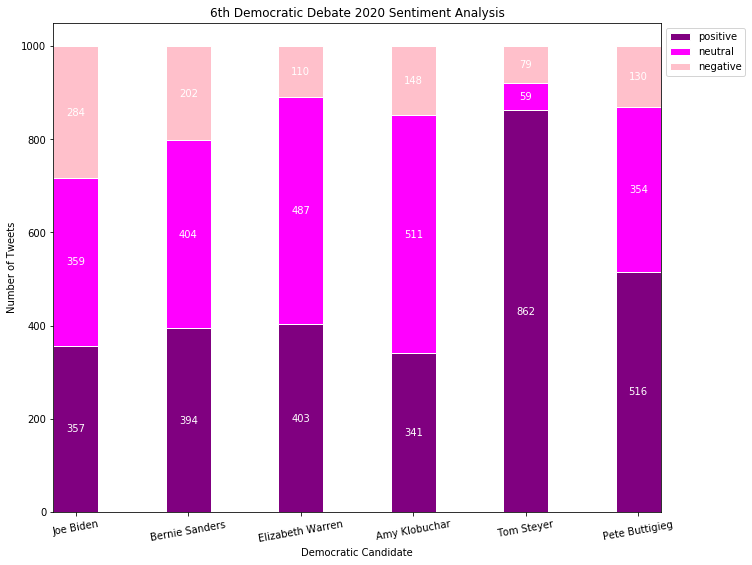

```python
# dem debate words
dem_search = {"Joe Biden": "joe biden",
              "Bernie Sanders": "bernie",
              "Elizabeth Warren": "elizabeth warren",
              "Amy Klobuchar": "klobuchar",
              "Tom Steyer": "tom steyer",
              "Pete Buttigieg": "buttigieg"}
date_since = "2020-01-14"
dem_df = produce_dataframe(dem_search, 1000)
dem_df
```

Setting Up the Graph

Even though this is a stacked bar graph, you are still graphing numbers on a bar graph with the caveat that the bars are on top of each other. Thus, the location of the bars needs to be controlled.

To make the bars on your graph, there are two steps you need to take:

* Locate the positive, neutral, and negative rows in your data frame. That is the data you will be graphing.

* Use `plt.bar` to draw the bars.

  * Start off by graphing the positive bars and making sure they work.

  * Then, graph the neutral bars, setting the bottom to be the positive bars.

  * Finally, graph the negative bars, setting the bottom to be the sum of the positive and neutral bars.
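The stacking steps above can be sketched as follows. This is a minimal illustration under stated assumptions: the data frame holds made-up counts, the `plot_stacked` helper is hypothetical, and the Agg backend is selected only so the sketch runs without a display.

```python
import matplotlib
matplotlib.use("Agg")  # render off-screen; remove this in a notebook
import matplotlib.pyplot as plt
import pandas as pd

# Hypothetical counts, not real debate data.
df = pd.DataFrame(
    {"Candidate A": [40, 35, 25], "Candidate B": [30, 50, 20]},
    index=["positive", "neutral", "negative"],
)


def plot_stacked(df):
    """Draw one stacked bar per column of df and return the Axes."""
    fig, ax = plt.subplots()
    names = list(df.columns)
    positive = df.loc["positive"].to_numpy()
    neutral = df.loc["neutral"].to_numpy()
    negative = df.loc["negative"].to_numpy()

    # Each layer starts where the layers beneath it end.
    ax.bar(names, positive, label="positive")
    ax.bar(names, neutral, bottom=positive, label="neutral")
    ax.bar(names, negative, bottom=positive + neutral, label="negative")
    ax.legend()
    return ax
```

The `bottom` argument is what turns three ordinary bar charts into one stacked chart: the neutral layer sits on the positive counts, and the negative layer sits on the sum of the two.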

Now that your bars are set up, your graph most likely looks unorganized. To organize our graph, we can set margins to the x ticks to space them out. Because the specifics of how to space out x tick labels can get quite complicated for this boot camp, I'll provide a chunk of code for you here:

```python
# space out x ticks and give margins
plt.gca().margins(x=0)
plt.gcf().canvas.draw()
tl = plt.gca().get_xticklabels()
maxsize = max([t.get_window_extent().width for t in tl])
m = 0.1  # inch margin
s = maxsize/plt.gcf().dpi*7+2*m
margin = m/plt.gcf().get_size_inches()[0]
plt.gcf().subplots_adjust(left=margin, right=1.-margin)
plt.gcf().set_size_inches(s, plt.gcf().get_size_inches()[1])
plt.title("7th Democratic Debate 2020 Sentiment Analysis")
```

This code should space out your x ticks and set margins.

Don't forget a title (`plt.title`) and a legend (`plt.legend`)!

To add text labels, we'll have to iterate through all the bars and attach an appropriate label to each one. First, set up a list called labels. All of the positive, neutral, and negative data should be in this one list.

We can use ax.patches to find a list of all the bars currently in the graph. We can then zip the labels and patches together, iterate through that, and for each patch use ax.text to attribute a label to each bar. Make sure you have an appropriate location when using ax.text!
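As a rough sketch of that labeling loop, the example below draws two stacked layers from made-up counts and writes each count at the center of its bar. The names and numbers are hypothetical, and centering the text is just one reasonable choice of location.

```python
import matplotlib
matplotlib.use("Agg")  # render off-screen; remove this in a notebook
import matplotlib.pyplot as plt

names = ["Candidate A", "Candidate B"]
positive = [40, 30]  # hypothetical counts
neutral = [35, 50]

fig, ax = plt.subplots()
ax.bar(names, positive)
ax.bar(names, neutral, bottom=positive)

# One list of labels, in the same order the bars were drawn.
labels = positive + neutral

for label, patch in zip(labels, ax.patches):
    # Place the text at the center of the bar's rectangle.
    x = patch.get_x() + patch.get_width() / 2
    y = patch.get_y() + patch.get_height() / 2
    ax.text(x, y, str(label), ha="center", va="center")
```

Because `ax.patches` lists the bars in drawing order, the labels list must follow the same order: all positive values first, then all neutral values.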

Seeing Your Graph

It's time to put all of our functions together into a program! Call produce_dataframe() and produce_graph() inside of your main() function with the proper parameters, and run main() to see your completed graphs!

Example bar graph

Conclusion

With the help of APIs in sentiment analysis, we can draw objective, accurate conclusions from the data we collect in a labor-efficient and structured way. The categorization of emotions from key words and phrases lets us analyze them apart from external factors like biases and hidden search results. In politics, sentiment analysis performed on diverse platforms like Twitter not only provides reliable results, but also reduces the likelihood of corruption. Ultimately, this increases transparency, making for a well-informed citizen body and mutual trust between politicians and their supporters.

You can apply what you learned about APIs and sentiment analysis to other areas of life as well. For instance, if you’re conducting research in the social sciences, you can use APIs to gather a large amount of data from a sample or population in a very short time. You can then apply your sentiment analysis knowledge to draw conclusions from the data you collected. APIs such as the ones developed by Twitter are available online to anyone with a developer account, and libraries such as Tweepy and TextBlob are open source. This ease of use allows anyone to conduct their desired research, whether it’s to establish personal brand identity, find out the true feelings an audience has about certain businesses, or simply for fun.